Most employee surveys produce polished lies. People rate their managers a 7 out of 10 when they mean a 4, skip the open-ended questions entirely, or write something safe enough to sound engaged without actually saying anything. The survey closes, results get summarized, leadership sees mostly neutral data, and nothing changes.

The fix is not better questions. It is removing the fear that makes people hedge in the first place. That is what anonymous surveys do when they are set up correctly.

This guide covers what genuine anonymity requires technically, when it matters most, how to design surveys that do not accidentally defeat their own purpose, and what to do with the results afterward.

What makes a survey genuinely anonymous

An anonymous survey is one where no one, including survey administrators, can connect a response to the person who submitted it. That definition matters because many surveys described as "anonymous" do not meet it.

A survey is truly anonymous only when all of the following apply: the survey uses a shared link rather than personalized invitations sent to named individuals; the platform does not log IP addresses, device identifiers, or session data; administrators can only see aggregated results, not individual responses; no demographic combination in the survey is narrow enough to identify a specific person; and the tool does not require participants to log in or create an account before responding.

If any of those conditions fail, participants are right to be skeptical. Employees have good instincts about traceability. A survey that promises anonymity but sends personalized email links, for example, will not produce honest responses no matter what the intro text says.
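One way to make that checklist concrete is to record the answers for a given setup and list whichever conditions fail. The Python sketch below is illustrative only: the `SurveySetup` class, its field names, and the `anonymity_failures` function are hypothetical and do not correspond to any platform's API. The answers themselves would come from reading the platform's privacy documentation.

```python
from dataclasses import dataclass


@dataclass
class SurveySetup:
    # Hypothetical fields mirroring the five conditions described above.
    uses_shared_link: bool
    logs_ip_or_session_data: bool
    admins_see_individual_responses: bool
    has_identifying_demographic_combo: bool
    requires_login: bool


def anonymity_failures(setup: SurveySetup) -> list[str]:
    """Return the conditions this setup fails; an empty list means all five hold."""
    checks = [
        (setup.uses_shared_link,
         "uses personalized invitations instead of a shared link"),
        (not setup.logs_ip_or_session_data,
         "logs IP addresses, device identifiers, or session data"),
        (not setup.admins_see_individual_responses,
         "lets administrators see individual responses"),
        (not setup.has_identifying_demographic_combo,
         "includes a demographic combination narrow enough to identify someone"),
        (not setup.requires_login,
         "requires a login or account before responding"),
    ]
    return [message for passed, message in checks if not passed]


# Example: a survey that promises anonymity but sends personalized email links.
setup = SurveySetup(
    uses_shared_link=False,
    logs_ip_or_session_data=False,
    admins_see_individual_responses=False,
    has_identifying_demographic_combo=False,
    requires_login=False,
)

for failure in anonymity_failures(setup):
    print("Not anonymous:", failure)
```

A single failed condition is enough to treat the survey as something other than anonymous, which is why the function returns every failure rather than a score.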

Confidential surveys are different. A confidential survey collects identifying information but restricts who can see it. HR may know who said what; the respondent's manager does not. This is useful for follow-up purposes, but it does not produce the same candor as true anonymity, particularly on topics involving management.

Why it matters: the psychology of honest feedback

Fear of consequences is the primary reason surveys fail. When an employee thinks a negative response could affect their relationship with their manager, their performance review, or their standing on the team, they self-censor. This happens even when the organization has no intention of retaliating. The perception of risk is enough.

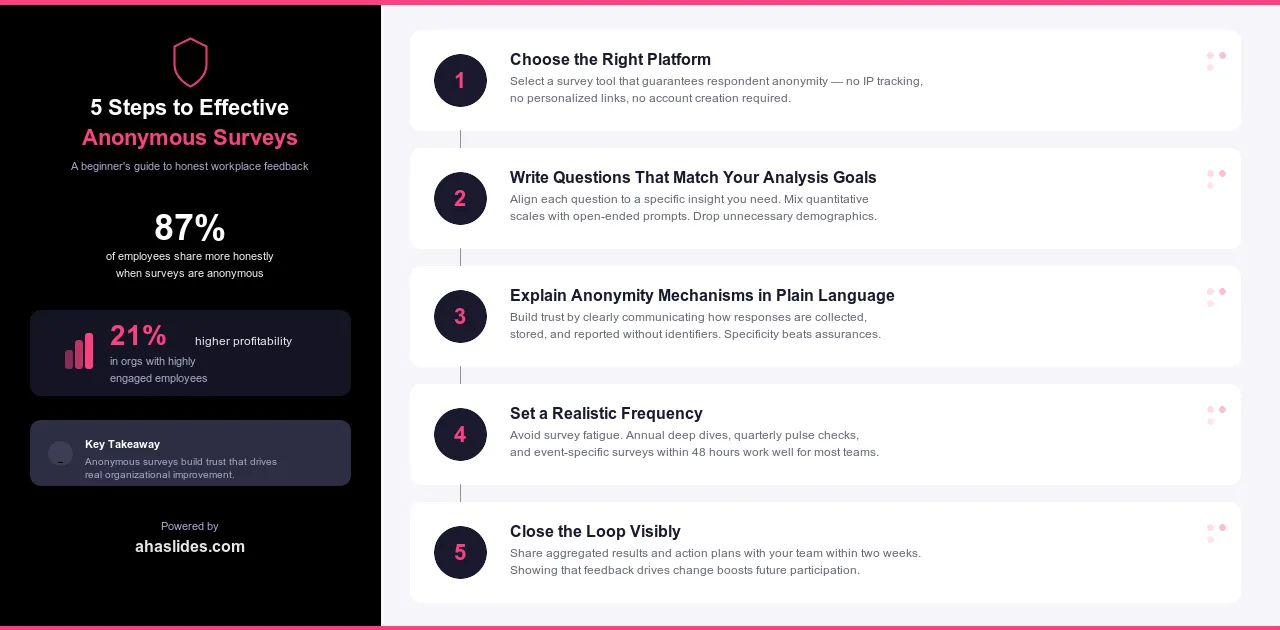

Research attributed to the American Psychological Association, as cited by DeskAlerts, found that around 87% of employees feel more comfortable sharing honest feedback when surveys are anonymous [1]. That comfort translates into higher completion rates, more specific responses, and willingness to raise issues that never surface in named surveys: management practices, workload imbalances, discrimination, pay dissatisfaction, and cultural problems.

Business units in the top quartile of employee engagement show 21% higher profitability and 17% higher productivity than those in the bottom quartile, according to Gallup's long-running meta-analysis of workplace data [2]. Anonymous surveys are one of the mechanisms that make genuine engagement measurable and improvable.

The other benefit is reducing social desirability bias. Without anonymity, respondents tend to answer in ways they think reflect well on them or match what they assume the organization wants to hear. Anonymous surveys reduce that effect substantially.

When to use anonymous surveys

Anonymity is not always necessary. A survey asking employees to rate the catering at an office event does not need strong anonymity protections. But in the following contexts, it is hard to get reliable data without it: employee engagement and satisfaction surveys, training and L&D evaluations, questions covering sensitive workplace topics, and event or conference feedback where candid minority opinions are the most useful data point.

Each of these contexts shares the same dynamic: participants have a reason to self-censor when identified, and that self-censorship is exactly what produces the polished, neutral data that tells you nothing useful.

Employee engagement and satisfaction

This is the most common use case. Engagement surveys covering management quality, compensation, career development, inclusion, and psychological safety all touch on topics where employees have strong reasons to shade their responses when identified. Anonymous surveys surface the real distribution of sentiment, not the version employees think is safe to share.

A mid-sized technology company ran named pulse surveys for two years and saw consistently high satisfaction scores. After switching to anonymous surveys through AhaSlides, the first round surfaced widespread concerns about a specific team's management practices that had never appeared in previous results. Three managers received additional coaching and support.

Training and L&D evaluation

Trainers have a professional stake in their sessions going well, which creates social pressure on participants to give positive feedback. An L&D professional evaluating her own workshop should expect inflated scores on a named survey. Anonymous post-training evaluations produce more accurate data on what content landed, what confused people, and whether participants actually expect to apply what they learned.

This is especially true for mandatory compliance training, where participants may have strong negative views they will not voice when identified.

Sensitive topics

Workplace harassment, discrimination, mental health, substance abuse, and similar topics require anonymity for any meaningful data collection. Even a perception that responses could be traced back makes participation rates collapse and produces heavily filtered answers from those who do respond.

Event and conference feedback

Attendees are more candid about speakers, session quality, and logistics when they know the feedback is anonymous. For conference organizers trying to improve future events, the honest minority opinion (the session that bored people, the keynote that ran long) is often the most valuable data point.

Designing surveys that do not compromise their own anonymity

Technical anonymity can be undermined at the question level. These are the most common mistakes.

The first risk is demographic questions in small teams. If you ask for department, role, and tenure in a 12-person team, you may narrow a response down to one or two people. Only include demographics that are genuinely necessary for analysis, and make sure the categories are broad enough that no single combination identifies an individual.

The second is open-ended questions with situation-specific prompts. Asking "describe a specific recent incident where you felt unsupported" invites responses that contain enough detail to identify the respondent. A better approach: ask "how often do you feel unsupported in your role?" as a rating, then offer an optional open field with a note to avoid including specific dates, names, or events.

Questions that only apply to a small group create the same problem. If one team of three recently went through a leadership change and you ask all employees about recent leadership transitions, the responses from that group are effectively identifiable.

The last risk is timing and routing. Conditional logic that sends different respondents through different question branches can sometimes allow administrators to infer who saw which path, and submission timestamps can do the same when only a few people respond in a given window. Keep branching logic simple or eliminate it entirely in small-group surveys.
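The demographic risk described earlier in this section can be checked before a survey launches by counting how many people share each combination of answers. The Python sketch below assumes you have a roster of the invited group; the `risky_combinations` function, the roster data, and the group size of 5 used here are all hypothetical choices for illustration, not a recommended standard.

```python
from collections import Counter


def risky_combinations(roster, fields, min_group_size):
    """Return each combination of field values shared by fewer than min_group_size people."""
    counts = Counter(tuple(person[field] for field in fields) for person in roster)
    return {combo: n for combo, n in counts.items() if n < min_group_size}


# Hypothetical 12-person team, described only by the demographics the survey would ask for.
roster = (
    [{"department": "Engineering", "role": "Developer", "tenure": "0-2 years"}] * 5
    + [{"department": "Engineering", "role": "Developer", "tenure": "3+ years"}] * 4
    + [{"department": "Engineering", "role": "Designer", "tenure": "0-2 years"}] * 2
    + [{"department": "Engineering", "role": "Manager", "tenure": "3+ years"}] * 1
)

# Asking for all three demographics leaves several combinations shared by only a few people.
print(risky_combinations(roster, ["department", "role", "tenure"], min_group_size=5))

# Asking for tenure alone keeps every group at or above the chosen size.
print(risky_combinations(roster, ["tenure"], min_group_size=5))
```

If the first call returns anything, the usual options are to drop a demographic question or to merge categories until the result is empty.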

Step-by-step implementation

1. Choose the right platform

Evaluate platforms on these specifics: Does it suppress IP tracking? Does access require a personal login or just a shared link? Can administrators view individual responses? What data retention and deletion policies apply?

AhaSlides enables genuinely anonymous participation through shared QR codes and links that do not track individual access. Administrators see only aggregated results, and participants do not create accounts.

2. Write questions that match your analysis goals

Decide in advance what you will do with the results. If you need to compare engagement across departments, you need department as a demographic. If you just need an overall picture, drop the demographic questions entirely. Every question should have a clear decision it informs.

Use rating scales and multiple-choice questions as the default. They are easier to analyze, harder to accidentally de-anonymize, and faster to complete.

3. Explain the anonymity mechanisms in plain language

"This survey is anonymous" is not enough. Employees have heard that before and remain skeptical. Explain specifically: "This survey uses a shared link, not personalized invitations. We cannot see who submitted which response. Only aggregated results are visible to administrators."

Acknowledge the common worries directly: writing style identification, submission timing, IP tracking. Explain what protections are in place. Credibility comes from specificity, not assurances.

4. Set a realistic frequency

Annual comprehensive surveys (20-30 questions) work well for deep engagement assessments. Quarterly pulse surveys (5-10 questions) maintain visibility without burning people out. Event-specific surveys should go out within 24-48 hours while the experience is fresh.

The main error is over-surveying. If people receive anonymous surveys every few weeks, they start completing them quickly and carelessly, which defeats the purpose. Quality of response matters more than volume.

5. Close the loop visibly

Anonymous feedback produces resentment, not improvement, when it disappears into a report nobody acts on. Share a summary of themes and findings with all participants within two weeks of the survey closing. When you make changes based on the results, say so explicitly and connect the change to the feedback.

When you cannot act on something, explain why. "We heard that the commuting stipend is insufficient. We are not able to increase it this year due to budget constraints, but we have raised it as a priority for next year's planning cycle" is more trust-building than silence.

Common mistakes to avoid

Even well-intentioned anonymous surveys can fall short when the execution overlooks a few recurring problems.

The first is promising anonymity on a platform that cannot deliver it. Some tools marketed as anonymous still log IP addresses or require email-based access. Before launching a survey, verify the platform's data collection practices in its privacy documentation, not just its marketing copy. If the tool sends each participant a unique link, that is a confidential survey, not an anonymous one.

The second is sharing results with groups too small to protect. A team of five people does not need to see its own breakdown by tenure level. When sharing results back to subgroups, set a minimum threshold of ten respondents before displaying data for that segment. If a subgroup falls below the threshold, roll those responses into a broader category or report only at the aggregate level.

Skipping the results debrief entirely is the third. One of the fastest ways to kill future participation is to collect feedback and go silent. Employees draw their own conclusions when they hear nothing. If results are still being analyzed, send a brief update acknowledging that the survey closed, how many responses came in, and when people can expect to hear back.

The last is treating every survey as a one-off. Organizations that run a single anonymous survey after a difficult period and then revert to named feedback cycles lose the trust they built. Anonymous surveys work best as part of a consistent listening strategy, not as a crisis response. When employees see that anonymity is the standard approach rather than an exception, participation rates and candor improve over time.

Frequently asked questions

Can management figure out who said what, even in a genuinely anonymous survey?

With a properly configured platform, no. When a survey uses a shared link rather than personalized invitations, and the tool does not log IP addresses or session data, there is no technical record linking a response to a device or person. The remaining risks sit in the content itself: demographic combinations narrow enough to point to one person in a small group, and self-identification through unusually specific write-in answers. Keep demographic categories broad, and remind participants in the survey introduction to keep open-ended responses general rather than citing specific dates, names, or events.

What is the minimum group size for an anonymous survey to be safe?

Most practitioners set a floor of eight to ten respondents before reporting results for any segment. Below that threshold, even multiple-choice data can be traced back in context. For sensitive topics, some organizations raise the minimum to fifteen. If your team is smaller than the threshold, consider combining results with another comparable group or reporting only at the organization-wide level.
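If you export raw responses and build the report yourself, the threshold can be enforced in code. The following Python sketch is one possible approach, assuming each response is a simple record with a segment and a numeric score; the `report_segments` function, the field names, and the sample data are hypothetical. It uses a floor of ten and folds smaller segments into one combined bucket, which is itself shown only if it clears the floor.

```python
from collections import defaultdict


def summarize(scores):
    """Respondent count and mean score for one group."""
    return {"n": len(scores), "mean": round(sum(scores) / len(scores), 2)}


def report_segments(responses, segment_key, score_key,
                    min_respondents=10, rollup_label="Other (combined)"):
    """Report per-segment results, pooling segments below the floor into one bucket."""
    by_segment = defaultdict(list)
    for response in responses:
        by_segment[response[segment_key]].append(response[score_key])

    report, pooled = {}, []
    for segment, scores in by_segment.items():
        if len(scores) >= min_respondents:
            report[segment] = summarize(scores)
        else:
            pooled.extend(scores)  # too small to show on its own

    # The combined bucket must also clear the floor; otherwise those
    # responses only count toward organization-wide figures.
    if len(pooled) >= min_respondents:
        report[rollup_label] = summarize(pooled)
    return report


# Hypothetical responses: two large teams and two teams below the floor.
responses = (
    [{"team": "Sales", "score": 4}] * 12
    + [{"team": "Support", "score": 3}] * 10
    + [{"team": "Finance", "score": 5}] * 8
    + [{"team": "Legal", "score": 2}] * 3
)

for segment, stats in report_segments(responses, "team", "score").items():
    print(segment, stats)
```

Pooling keeps the small teams' answers in the report without exposing either team on its own. One caveat of this simple version: if only one segment is pooled and totals are published alongside, its figures could be inferred by subtraction, so a stricter policy may be needed for sensitive topics.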

How do you get employees to actually trust that the survey is anonymous?

Trust is earned through repeated behavior, not one-time assurances. In the short term, be specific about the technical setup in the survey introduction. In the longer term, follow through consistently: share results promptly, act on what you can, explain what you cannot change, and never reference individual-sounding feedback in management conversations. Employees notice when anonymous data appears to have informed a targeted response, and they adjust their future answers accordingly.

Running anonymous surveys with AhaSlides

For HR teams and L&D professionals who run surveys during live sessions or asynchronously, AhaSlides handles both modes. Participants join through a shared code without creating accounts. Rating scales, multiple-choice questions, and open-ended fields are all available. Results appear in real time for facilitators, aggregated only, so you can discuss findings with a group while the session is still running.

For post-training evaluations in particular, reviewing anonymous results together at the end of a workshop produces a different kind of conversation than sending a survey link after everyone has gone home. Participants see the group's honest distribution, not the version that people put their names on.

Sources

[1] American Psychological Association research, as cited by DeskAlerts: https://www.alert-software.com/blog/anonymous-employee-survey

[2] Gallup. "The Relationship Between Engagement at Work and Organizational Outcomes." Meta-analysis of Q12 research. https://www.gallup.com/workplace/349484/state-of-the-global-workplace.aspx